tag

Input Splits and Key-Value Terminologies for MapReduce

As we already know, files in Hadoop are composed of individual records, which are ultimately processed one by one by mapper tasks.

Log Data Ingestion with Flume

Some of the data that ends up in HDFS might land there through database load operations or other types of batch processes.

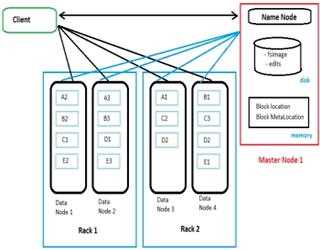

NameNode Design and Its Working in HDFS

Whenever a user stores a file in HDFS, the file is first broken down into data blocks, and three replicas of each data block are stored on slave nodes (data nodes) throughout the Hadoop cluster.
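As a rough illustration of the splitting and replication described above, here is a minimal sketch in Java. It assumes a 128 MB block size (a common HDFS default, not stated in the teaser) and the replication factor of three mentioned above. The class and method names are hypothetical and are not part of the Hadoop API.

```java
// Hypothetical helper, not part of Hadoop: estimates how many blocks and
// block replicas a file would occupy under the assumed settings.
public class BlockSketch {
    // Assumption: 128 MB block size; real clusters may configure a different value.
    static final long ASSUMED_BLOCK_BYTES = 128L * 1024 * 1024;
    // Replication factor taken from the text above (three replicas per block).
    static final int REPLICATION = 3;

    // Number of blocks needed for a file: ceiling of fileBytes / blockBytes.
    public static long blockCount(long fileBytes, long blockBytes) {
        if (fileBytes < 0 || blockBytes <= 0) {
            throw new IllegalArgumentException("sizes must be non-negative and block size positive");
        }
        return (fileBytes + blockBytes - 1) / blockBytes;
    }

    // Total block replicas stored across the data nodes.
    public static long totalReplicas(long fileBytes, long blockBytes, int replication) {
        return blockCount(fileBytes, blockBytes) * replication;
    }

    public static void main(String[] args) {
        long fileBytes = 300L * 1024 * 1024; // an illustrative 300 MB file
        System.out.println("blocks:   " + blockCount(fileBytes, ASSUMED_BLOCK_BYTES));
        System.out.println("replicas: " + totalReplicas(fileBytes, ASSUMED_BLOCK_BYTES, REPLICATION));
    }
}
```

Under these assumptions the last block of a file is usually only partly filled, which is why the block count is a ceiling rather than a plain division.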

Checkpointing Process in HDFS

As we already know, HDFS is a journaled file system, where new changes to files in HDFS are captured in an edit log that’s stored on the NameNode in a file named edits.

Concept of Data compression in Hadoop

The massive data volumes that are very common in a typical Hadoop deployment make compression a necessity.

Importance of Map Reduce in Hadoop

From the beginning of Hadoop’s history, MapReduce has been the game changer when it comes to data processing.

Slave Node Server Design for HDFS

When choosing storage options, consider the impact of using commodity drives rather than more expensive enterprise-quality drives.

How to Choose the Right Hadoop Distribution?

Commercially available distributions of Hadoop offer different combinations of open source components from the Apache Software Foundation and from several other sources.

Hadoop Tools: Amazon Services

A number of companies offer tools designed to help you get the most out of your Hadoop implementation. Here’s a sampling:

Concept of Key-Value Pair Data in Hadoop MapReduce

First of all, let’s clarify what we mean by “key-value” pairs by looking at similar concepts in the Java standard API.
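One familiar key-value structure in the Java standard API is `java.util.Map`, where each key is associated with exactly one value. The sketch below counts words into a map, which loosely mirrors the kind of (word, count) pairs a MapReduce job might produce. It is an illustration only: the class and method names are hypothetical, and it does not use any Hadoop classes.

```java
import java.util.Map;
import java.util.TreeMap;

// Hypothetical example class: plain Java, no Hadoop dependencies.
public class KeyValueSketch {
    // Builds (word -> count) pairs from whitespace-separated text.
    public static Map<String, Integer> countWords(String text) {
        Map<String, Integer> counts = new TreeMap<>(); // sorted by key for stable output
        for (String word : text.trim().split("\\s+")) {
            if (word.isEmpty()) {
                continue; // empty input yields a single empty token; skip it
            }
            // merge() inserts 1 for a new key, or adds 1 to the existing value.
            counts.merge(word, 1, Integer::sum);
        }
        return counts;
    }

    public static void main(String[] args) {
        Map<String, Integer> counts = countWords("hadoop stores data hadoop processes data");
        // Each Map.Entry is one key-value pair.
        for (Map.Entry<String, Integer> pair : counts.entrySet()) {
            System.out.println(pair.getKey() + " -> " + pair.getValue());
        }
    }
}
```

A `Map` keeps one value per key, whereas MapReduce can carry many pairs with the same key between stages, so the analogy is a starting point rather than an exact match.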

Apache Hadoop Ecosystem

There are several other open source components that are typically seen in a Hadoop deployment.

Hadoop Distributed File System (HDFS)

HDFS is unlike most file systems we may have encountered before. It is not a POSIX-compliant file system, which basically means it does not provide the same guarantees as a regular file system.