Non-Blocking Synchronization in C#

The .NET Framework provides non-blocking synchronization constructs for highly concurrent and performance-critical scenarios. These constructs perform operations without ever blocking, pausing, or waiting.

Writing non-blocking or lock-free multithreaded code correctly is difficult. We must think carefully about whether we really need the performance benefits before dismissing ordinary locks.

The non-blocking approaches also work across multiple processes, for example when reading and writing process-shared memory.

The compiler, the runtime, and the CPU may reorder instructions and cache reads and writes to improve efficiency. C# and the runtime are very careful to ensure that such optimizations don't break single-threaded code, or multithreaded code that makes proper use of locks.

Outside of these scenarios, you must explicitly defend against these optimizations by creating memory barriers (also called memory fences) to limit the effects of instruction reordering and read/write caching.

A full memory barrier (full fence) prevents any kind of instruction reordering or caching around that fence. Calling Thread.MemoryBarrier generates a full fence.

For example:

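class MemoryBarriersEx
{
    int _answer;
    bool _finished;

    void Method1()
    {
        _answer = 100;
        _finished = true;   // may become visible before the write to _answer
    }

    void Method2()
    {
        // Without fences, _answer may be read before _finished.
        if (_finished) Console.WriteLine(_answer);
    }
}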

If Method1 and Method2 run concurrently on different threads, it is possible for Method2 to write "0", because the writes to _answer and _finished (or the reads of them) may be reordered or cached.

So, we need to create memory barriers to limit the effects of instruction reordering and read/write caching by other threads. We can fix our example by applying four full fences as follows:

class MemoryBarriersEx
{
    int _answer;
    bool _finished;

    void Method1()
    {
        _answer = 100;
        Thread.MemoryBarrier(); // Barrier 1
        _finished = true;
        Thread.MemoryBarrier(); // Barrier 2
    }

    void Method2()
    {
        Thread.MemoryBarrier(); // Barrier 3
        if (_finished)
        {
            Thread.MemoryBarrier(); // Barrier 4
            Console.WriteLine(_answer);
        }
    }
}

Here, barriers 1 and 4 prevent this example from writing "0". Barriers 2 and 3 provide a freshness guarantee: they ensure that if Method2 runs after Method1, reading _finished evaluates to true.

A good approach is to start by putting memory barriers before and after every instruction that reads or writes a shared field, and then strip away the ones you don't need. If you are uncertain of any, leave them in, or better, switch back to using locks.

Another way to solve this problem is to apply the volatile keyword to the _finished field.

volatile bool _finished;

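The volatile keyword instructs the compiler to generate an acquire fence on every read from that field, and a release fence on every write to that field. An acquire fence prevents later reads and writes from being moved before it; a release fence prevents earlier reads and writes from being moved after it.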
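As a rough sketch of how the volatile variant might be exercised, the program below runs the two methods on separate threads. The VolatileEx class name, the Run helper, and the spin loop in Method2 are illustrative additions and are not part of the original example.

```csharp
using System;
using System.Threading;

class VolatileEx
{
    int _answer;
    volatile bool _finished;   // acquire fence on each read, release fence on each write

    void Method1()
    {
        _answer = 100;
        _finished = true;      // release: the write to _answer cannot move after this write
    }

    int Method2()
    {
        // Spin until the flag is published (illustrative; not in the original example).
        while (!_finished) Thread.Yield();
        return _answer;        // acquire: this read cannot move before the read of _finished
    }

    // Hypothetical helper that runs both methods concurrently and returns what Method2 saw.
    public static int Run()
    {
        var ex = new VolatileEx();
        int result = 0;
        var reader = new Thread(() => result = ex.Method2());
        var writer = new Thread(ex.Method1);
        reader.Start();
        writer.Start();
        reader.Join();
        writer.Join();
        return result;
    }

    static void Main() => Console.WriteLine(Run());   // prints 100
}
```

Because Method2 only reads _answer after it has observed _finished as true, the acquire/release pairing is enough here and no explicit Thread.MemoryBarrier calls are needed.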

Interlocked:

The use of memory barriers is not always enough when reading or writing fields in lock-free code. Operations on 64-bit fields, increments, and decrements require the heavier approach of the Interlocked helper class. Interlocked also provides the Exchange and CompareExchange methods, the latter enabling lock-free read-modify-write operations with a little additional coding.
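The following is a minimal sketch of these Interlocked operations, assuming a shared 64-bit total and a shared maximum. The InterlockedEx class, the UpdateMax method, and the Run helper are hypothetical names chosen for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;

static class InterlockedEx
{
    static long _total;   // 64-bit field shared between threads
    static int _max;      // shared maximum, updated without locks

    // Lock-free read-modify-write: retry until no other thread changed _max in between.
    public static void UpdateMax(int candidate)
    {
        int seen;
        do
        {
            seen = Volatile.Read(ref _max);   // take a snapshot of the current value
            if (candidate <= seen) return;    // nothing to update
        }
        while (Interlocked.CompareExchange(ref _max, candidate, seen) != seen);
    }

    // Hypothetical helper: adds 1..1000 from many threads and tracks the largest value.
    public static (long total, int max) Run()
    {
        _total = 0;
        _max = 0;
        Parallel.For(1, 1001, i =>
        {
            Interlocked.Add(ref _total, i);   // atomic 64-bit addition
            UpdateMax(i);
        });
        return (Interlocked.Read(ref _total), _max);
    }

    static void Main()
    {
        var (total, max) = Run();
        Console.WriteLine(total + " " + max);   // prints 500500 1000
    }
}
```

CompareExchange writes the new value only if the field still holds the snapshot; otherwise the loop takes a fresh snapshot and tries again. That retry loop is the "little additional coding" that a lock-free read-modify-write requires.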

Signaling with Wait and Pulse:

The Monitor class provides another signaling construct via the static methods Wait and Pulse (and PulseAll). The approach is that we write the signaling logic ourselves using custom flags and fields (within lock statements), and then introduce Wait and Pulse commands to prevent spinning. With just these methods and the lock statement, you can achieve the functionality of AutoResetEvent, ManualResetEvent, and Semaphore.
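A minimal sketch of this pattern might look as follows. The WaitPulseEx class, the _go flag, the _message field, and the Run helper are assumptions made for illustration.

```csharp
using System;
using System.Threading;

class WaitPulseEx
{
    static readonly object _locker = new object();
    static bool _go;          // custom flag, only accessed inside lock (_locker)
    static string _message;

    static void Worker()
    {
        lock (_locker)
        {
            // Wait releases the lock while blocked and reacquires it before returning.
            // The loop rechecks the flag, so it also works if the signal came first.
            while (!_go) Monitor.Wait(_locker);
            _message = "signaled";
        }
    }

    // Hypothetical helper: starts the worker, signals it, and returns what it wrote.
    public static string Run()
    {
        _go = false;
        _message = null;
        var worker = new Thread(Worker);
        worker.Start();

        lock (_locker)
        {
            _go = true;               // change the blocking condition...
            Monitor.Pulse(_locker);   // ...then wake one waiting thread
        }

        worker.Join();
        return _message;
    }

    static void Main() => Console.WriteLine(Run());   // prints signaled
}
```

The flag carries the state and Wait/Pulse only prevent the worker from spinning, which is why the worker checks _go in a loop rather than relying on the pulse alone.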

Barrier Class:

The Barrier class is another signaling construct. It allows many threads to rendezvous at a point in time. The class is very fast and efficient, and is built upon Wait, Pulse, and spinlocks.

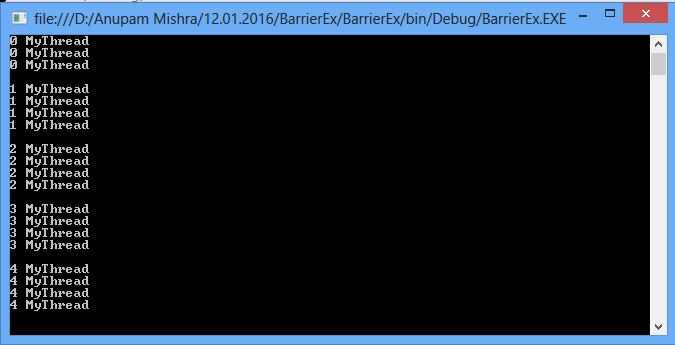

In the following example, each of four threads writes the numbers 0 through 4, while keeping in step with the other threads. The post-phase action passed to the Barrier constructor writes a blank line after each round:

using System;
using System.Threading;

namespace BarrierEx
{
    class Program
    {
        // Four participants; the post-phase action prints a blank line after each phase.
        static Barrier _barrier = new Barrier(4, barrier => Console.WriteLine());

        static void Main(string[] args)
        {
            Thread t1 = new Thread(DemoMethod);
            new Thread(DemoMethod).Start();
            new Thread(DemoMethod).Start();
            new Thread(DemoMethod).Start();
            t1.Start();
            Console.ReadKey();
        }

        static void DemoMethod()
        {
            Thread.CurrentThread.Name = "MyThread";
            for (int i = 0; i < 5; i++)
            {
                Console.WriteLine(i + " " + Thread.CurrentThread.Name);
                // Block until all four threads have written the current number.
                _barrier.SignalAndWait();
            }
        }
    }
}

Reader/Writer Locks:

A reader/writer lock supports a single writer and multiple readers, and is used to synchronize access to a shared resource.

At any given time, it allows either concurrent read access for multiple threads or write access for a single thread.

In a situation where a resource is changed infrequently, a reader/writer lock provides better throughput than a simple one-at-a-time lock.

A reader/writer lock works best when most accesses are reads, while writes are infrequent and of short duration. Multiple readers alternate with single writers, so that neither readers nor writers are blocked for long periods.
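As a minimal sketch of this read-mostly pattern, the program below guards a shared integer with the ReaderWriterLock class from System.Threading. The ReaderWriterEx class, the Read and Write wrappers, the thread counts, and the Run helper are illustrative assumptions.

```csharp
using System;
using System.Threading;

static class ReaderWriterEx
{
    static readonly ReaderWriterLock _rw = new ReaderWriterLock();
    static int _value;   // the shared resource

    public static int Read()
    {
        _rw.AcquireReaderLock(Timeout.Infinite);   // many readers may hold this at once
        try { return _value; }
        finally { _rw.ReleaseReaderLock(); }
    }

    public static void Write(int value)
    {
        _rw.AcquireWriterLock(Timeout.Infinite);   // exclusive: no readers, no other writer
        try { _value = value; }
        finally { _rw.ReleaseWriterLock(); }
    }

    // Hypothetical helper: one writer and three readers run concurrently.
    public static int Run()
    {
        Write(0);
        var writer = new Thread(() => { for (int i = 1; i <= 100; i++) Write(i); });
        var readers = new Thread[3];
        for (int r = 0; r < readers.Length; r++)
            readers[r] = new Thread(() => { for (int i = 0; i < 100; i++) Read(); });

        writer.Start();
        foreach (var t in readers) t.Start();
        writer.Join();
        foreach (var t in readers) t.Join();
        return Read();   // the last value written
    }

    static void Main() => Console.WriteLine(Run());   // prints 100
}
```

The try/finally blocks ensure the lock is released even if the guarded code throws.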

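As a point of comparison for locking overhead, the following program does not use a reader/writer lock; it measures the time taken by an ordinary lock against Interlocked.Increment for the same number of increments: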

using System;
using System.Diagnostics;
using System.Threading;

class Program
{
    static object _locker = new object();
    static int _test;
    const int _max = 10000000;

    static void Main()
    {
        // Time _max increments protected by an ordinary lock.
        var s1 = Stopwatch.StartNew();
        for (int i = 0; i < _max; i++)
        {
            lock (_locker)
            {
                _test++;
            }
        }
        s1.Stop();

        // Time the same number of increments using Interlocked.
        var s2 = Stopwatch.StartNew();
        for (int i = 0; i < _max; i++)
        {
            Interlocked.Increment(ref _test);
        }
        s2.Stop();

        Console.WriteLine("Total test records: " + _test);
        Console.WriteLine("Total time taken by normal lock (ms): " + s1.Elapsed.TotalMilliseconds);
        Console.WriteLine("Total time taken by Interlocked (ms): " + s2.Elapsed.TotalMilliseconds);
        Console.Read();
    }
}

Internally, a reader/writer lock queues readers and writers separately.

When a thread releases the writer lock, all threads waiting in the reader queue at that instant are granted reader locks. When all of those reader locks have been released, the next thread waiting in the writer queue, if any, is granted the writer lock.

While a thread in the writer queue is waiting for active reader locks to be released, threads requesting new reader locks accumulate in the reader queue. Their requests are not granted, even though they could share concurrent access with the existing reader-lock holders.

This helps to protect writers against indefinite blockage by readers. Most methods for acquiring a lock on a ReaderWriterLock accept timeout values. Use timeouts to avoid deadlocks in your applications.

For example, a thread might acquire the writer lock on one resource and then request a reader lock on a second resource. In the meantime, another thread might acquire the writer lock on the second resource and then request a reader lock on the first.

In this situation, the threads deadlock unless timeouts are used.

If the timeout interval expires and the lock request has not been granted, the method returns control to the calling thread by throwing an ApplicationException.
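The timeout behavior could be sketched as follows, assuming the ReaderWriterLock class from System.Threading. The TimeoutEx class, the TryReadWithTimeout method, the 100 ms timeout, and the Run helper are hypothetical and chosen only for illustration.

```csharp
using System;
using System.Threading;

static class TimeoutEx
{
    static readonly ReaderWriterLock _rw = new ReaderWriterLock();

    // Returns false instead of blocking forever when the reader lock cannot be acquired.
    public static bool TryReadWithTimeout(int millisecondsTimeout)
    {
        try
        {
            _rw.AcquireReaderLock(millisecondsTimeout);
        }
        catch (ApplicationException)
        {
            return false;   // timed out; the caller decides what to do next
        }

        try { return true; }   // the shared resource would be read here
        finally { _rw.ReleaseReaderLock(); }
    }

    // Hypothetical helper: a second thread holds the writer lock while we try to read.
    public static bool Run()
    {
        var writerHoldsLock = new ManualResetEvent(false);
        var releaseWriter = new ManualResetEvent(false);

        var writer = new Thread(() =>
        {
            _rw.AcquireWriterLock(Timeout.Infinite);
            try
            {
                writerHoldsLock.Set();     // tell the main thread the lock is taken
                releaseWriter.WaitOne();   // keep holding it until told to release
            }
            finally { _rw.ReleaseWriterLock(); }
        });

        writer.Start();
        writerHoldsLock.WaitOne();
        bool acquired = TryReadWithTimeout(100);   // times out: the writer still holds the lock
        releaseWriter.Set();
        writer.Join();
        return acquired;
    }

    static void Main() => Console.WriteLine(Run());   // prints False
}
```

Catching the exception lets a thread back off, release any locks it already holds, and retry, which is how timeouts break the deadlock described above.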
