In Natural Language Processing (NLP), computers must convert human language into numbers before they can understand or process text. Two popular techniques used for this purpose are TF-IDF and Word2Vec.

While both help machines work with text data, they differ fundamentally in how they represent words and whether they capture meaning.

What is Word2Vec?

Word2Vec is a technique that uses a shallow neural network to convert words into numerical vectors (word embeddings) while preserving their meaning and relationships.

It was developed by Google in 2013 to help machines understand context and semantic similarity between words.

In simple terms:

Word2Vec = A method that converts words into meaningful numerical vectors based on context.

Why Do We Need Word2Vec?

Traditional methods like TF-IDF treat each word as an independent token, so they cannot capture meaning or similarity between words.

For example:

- In TF-IDF, “King” and “Queen” are separate, unrelated features

- In Word2Vec, their vectors lie close together, so they are recognized as related

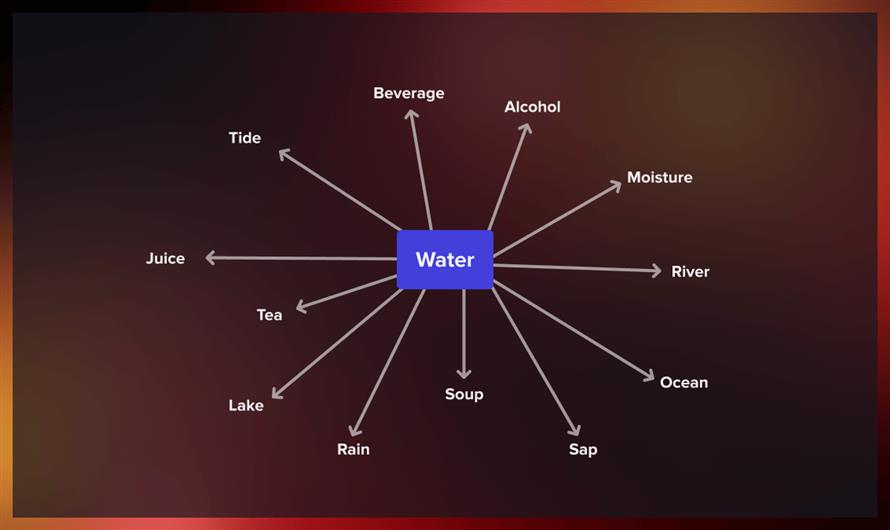

Word2Vec captures:

- Synonyms

- Context

- Relationships

- Word similarity

How Word2Vec Works

Word2Vec trains a shallow neural network on a large text corpus to learn word relationships; the network's learned weights become the word vectors.

The key idea:

Words appearing in similar contexts have similar meanings.

Example

Sentence:

"The cat is sitting on the mat"

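Trained on a large corpus containing many sentences like this one (for example, "The dog is resting on the floor"), Word2Vec learns that: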

- cat ≈ dog

- mat ≈ floor

- sitting ≈ resting

This happens because the words in each pair appear in similar contexts across many sentences, not because of any single sentence.
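To make the key idea concrete, here is a small sketch that counts which words appear within two positions of each word in a tiny, made-up corpus. It is a count-based illustration of the distributional idea only, not Word2Vec's training procedure; the corpus, the window size of 2, and the helper name `context_counts` are assumptions for illustration.

```python
from collections import Counter


def context_counts(sentences, window=2):
    """For each word, count the words appearing within `window` positions of it."""
    contexts = {}
    for sentence in sentences:
        tokens = sentence.lower().split()
        for i, word in enumerate(tokens):
            lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
            neighbors = [tokens[j] for j in range(lo, hi) if j != i]
            contexts.setdefault(word, Counter()).update(neighbors)
    return contexts


# Hypothetical toy corpus, chosen so each word pair shares its surroundings.
corpus = [
    "the cat is sitting on the mat",
    "the dog is sitting on the mat",
    "the cat is resting on the floor",
    "the dog is resting on the floor",
]

ctx = context_counts(corpus)
print(ctx["cat"] == ctx["dog"])          # True
print(ctx["mat"] == ctx["floor"])        # True
print(ctx["sitting"] == ctx["resting"])  # True
```

Each pair from the example ends up with identical context counts. Word2Vec does not store these counts, but its training objective is driven by the same (word, context) co-occurrences, which is why such pairs receive similar vectors.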

Types of Word2Vec Models

There are two main architectures:

1. CBOW (Continuous Bag of Words)

How it works:

Predicts a word based on surrounding context words.

Example:

Input:

"The ___ is barking"

Model predicts:

"dog"

Features:

- Faster training

- Works well with large datasets

- Good for common words
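CBOW's training data can be sketched as (context, target) pairs taken from a sliding window. This minimal sketch assumes whitespace tokenization and a window of 2 words on each side; the helper name `cbow_examples` is hypothetical. In the actual model, the context words' vectors are averaged and the result is used to predict the target word.

```python
def cbow_examples(tokens, window=2):
    """Build CBOW training examples: (context words, target word) for each position."""
    examples = []
    for i, target in enumerate(tokens):
        # Context = up to `window` words on each side, excluding the target itself.
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(lo, hi) if j != i]
        examples.append((context, target))
    return examples


# Assumes simple whitespace tokenization; window=2 is an illustrative choice.
tokens = "the dog is barking".split()
for context, target in cbow_examples(tokens):
    print(context, "->", target)
```

For position 1 this yields `(['the', 'is', 'barking'], 'dog')`, which is the fill-in-the-blank example above expressed as a training pair.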

2. Skip-Gram Model

How it works:

Uses a given (center) word to predict the words around it.

Example:

Input:

"dog"

Output predictions:

barking, pet, animal

Features:

- Better for rare words

- Often more accurate, especially on semantic relationships

- Slower than CBOW
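To show how the network actually learns from these predictions, here is a deliberately tiny skip-gram model in pure Python. It is a sketch under several assumptions: it uses a full softmax over the vocabulary (real implementations use faster approximations such as negative sampling or hierarchical softmax), plain stochastic gradient descent, and made-up values for the corpus, vector size (`dim`), window, learning rate, and epoch count. The names `skipgram_pairs` and `train_skipgram` are hypothetical.

```python
import math
import random


def skipgram_pairs(sentences, window=2):
    """Skip-gram training pairs: (center word, one neighbor within `window`)."""
    pairs = []
    for tokens in sentences:
        for i, center in enumerate(tokens):
            for j in range(max(0, i - window), min(len(tokens), i + window + 1)):
                if j != i:
                    pairs.append((center, tokens[j]))
    return pairs


def train_skipgram(sentences, dim=8, window=2, epochs=100, lr=0.05, seed=0):
    """Tiny skip-gram with a full softmax output layer, trained by plain SGD.

    Hyperparameters are illustrative assumptions.
    Returns (word -> input vector, average loss per epoch).
    """
    rng = random.Random(seed)
    vocab = sorted({w for tokens in sentences for w in tokens})
    index = {w: i for i, w in enumerate(vocab)}
    # Input vectors (the embeddings we keep) start small and random; output vectors start at zero.
    w_in = [[rng.uniform(-0.5, 0.5) / dim for _ in range(dim)] for _ in vocab]
    w_out = [[0.0] * dim for _ in vocab]
    pairs = skipgram_pairs(sentences, window)
    losses = []
    for _ in range(epochs):
        total = 0.0
        for center, context in pairs:
            h = w_in[index[center]]  # hidden layer = the center word's vector
            scores = [sum(a * b for a, b in zip(h, row)) for row in w_out]
            top = max(scores)
            exps = [math.exp(s - top) for s in scores]  # numerically stable softmax
            z = sum(exps)
            probs = [e / z for e in exps]
            target = index[context]
            total -= math.log(probs[target])  # cross-entropy loss for this pair
            grad_h = [0.0] * dim
            for j, row in enumerate(w_out):
                err = probs[j] - (1.0 if j == target else 0.0)  # dLoss/dScore_j
                for k in range(dim):
                    grad_h[k] += err * row[k]  # uses row[k] before it is updated
                    row[k] -= lr * err * h[k]  # update output vector j
            for k in range(dim):
                h[k] -= lr * grad_h[k]  # update the center word's vector in place
        losses.append(total / len(pairs))
    return {w: w_in[i] for w, i in index.items()}, losses


# Hypothetical toy corpus.
corpus = [s.split() for s in [
    "the dog is barking loudly",
    "the dog is a loyal pet",
    "a pet dog is an animal",
]]
vectors, losses = train_skipgram(corpus)
print(f"loss: {losses[0]:.3f} -> {losses[-1]:.3f}")
```

On a corpus this small the vectors are not meaningful; the point is the mechanics. Each center word's vector (`w_in`) is nudged so that the output layer (`w_out`) assigns higher probability to its observed neighbors, and the input vectors are what the model keeps as word embeddings.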

What Makes Word2Vec Powerful?

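Word2Vec vectors even support word arithmetic:

Example:

- vector("King") − vector("Man") + vector("Woman") ≈ vector("Queen")

- The result is not exactly "Queen"; rather, "Queen" is the word whose vector lies closest to the computed point (excluding the three input words). This shows that directions in the vector space capture semantic relationships such as gender.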
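The word arithmetic can be sketched with cosine similarity: compute the target point, then return the nearest word vector while skipping the three input words. The 3-dimensional vectors here are invented by hand so that the dimensions read as royalty, maleness, and femaleness; real Word2Vec vectors are learned and their individual dimensions are not interpretable like this. `analogy` and `cosine` are illustrative helpers, not a library API.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def analogy(a, b, c, vectors):
    """Return the word nearest to vec(b) - vec(a) + vec(c), excluding a, b, and c."""
    target = [vb - va + vc for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    candidates = (w for w in vectors if w not in {a, b, c})
    return max(candidates, key=lambda w: cosine(vectors[w], target))


# Hand-made, hypothetical vectors: dimensions ~ [royalty, maleness, femaleness].
vectors = {
    "king":  [0.9, 0.8, 0.1],
    "queen": [0.9, 0.1, 0.8],
    "man":   [0.1, 0.9, 0.1],
    "woman": [0.1, 0.1, 0.9],
    "apple": [0.0, 0.1, 0.1],
}

print(analogy("man", "king", "woman", vectors))  # queen
```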

Advantages of Word2Vec

1. Captures Meaning

- Understands context and semantic similarity.

2. Dense Representation

- Represents each word with a compact vector (typically 100 to 300 dimensions) instead of a huge, sparse, vocabulary-sized vector.

3. Handles Synonyms

- Similar words get similar vector values.

4. Improves NLP Accuracy

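- Pretrained embeddings are widely used as input features and often improve accuracy on tasks such as text classification and sentiment analysis.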

Limitations of Word2Vec

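- Cannot produce vectors for words not seen during training (out-of-vocabulary words)

- Needs a large training corpus to learn good vectors

- Embeddings are static: each word has one vector regardless of context, so "bank" gets the same vector in "river bank" and "bank account"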

What is TF-IDF? (Quick Recap)

TF-IDF is a statistical method that measures how important a word is in a document compared to a collection of documents.

It focuses on:

- Frequency of words

- Rarity across documents

However, it does not capture the meaning of words.
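A common textbook form of the score is tf-idf(t, d) = tf(t, d) × log(N / df(t)), where tf is the term's relative frequency in document d, N is the number of documents, and df(t) is the number of documents containing t. This sketch implements exactly that variant (with the natural log) on a made-up three-document corpus; libraries often use smoothed variants (scikit-learn's `TfidfVectorizer`, for example, smooths the IDF term by default), so their numbers will differ. The helper name `tf_idf` is hypothetical.

```python
import math
from collections import Counter


def tf_idf(documents):
    """Score each term per document as tf(t, d) * log(N / df(t)).

    tf is the term's count divided by the document length; log is the natural log.
    """
    tokenized = [doc.lower().split() for doc in documents]
    n_docs = len(tokenized)
    # df: number of documents containing each term (set() counts a term once per document)
    df = Counter(term for tokens in tokenized for term in set(tokens))
    scores = []
    for tokens in tokenized:
        counts = Counter(tokens)
        scores.append({
            term: (count / len(tokens)) * math.log(n_docs / df[term])
            for term, count in counts.items()
        })
    return scores


# Made-up corpus for illustration.
docs = [
    "the cat sat on the mat",
    "the dog sat on the rug",
    "the cat chased the dog",
]
for doc, weights in zip(docs, tf_idf(docs)):
    print(f"{doc!r}: top term = {max(weights, key=weights.get)!r}")
```

Because "the" occurs in every document, its IDF is log(3/3) = 0, so it scores zero everywhere, while words found in only one document ("mat", "rug", "chased") score highest. Nothing in the calculation relates "cat" to "dog"; it only counts.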

Word2Vec vs TF-IDF (Major Differences)

| Feature | TF-IDF | Word2Vec |
|---|---|---|
| Type | Statistical method | Shallow neural network model |
| Understands meaning | No | Yes |
| Handles synonyms | No | Yes |
| Context awareness | None (word counts only) | Learned from nearby words, but one fixed vector per word |
| Vector size | Vocabulary-sized (large, sparse) | Fixed and small (dense, typically 100 to 300 dimensions) |
| Speed | Faster | Slower |
| Training required | No | Yes |
| Use case | Keyword ranking | Semantic understanding |

Example Comparison

Sentence 1:

"I love dogs"

Sentence 2:

"I like puppies"

TF-IDF Result:

- Low similarity (the only shared word, "I", appears in both sentences and gets little or no IDF weight)

Word2Vec Result:

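- High similarity ("love"/"like" and "dogs"/"puppies" have nearby vectors, so sentence vectors built from them, for example by averaging, are close)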
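The comparison can be reproduced with a small sketch. The TF-IDF vectors use the tf × log(N / df) variant; for the Word2Vec side, each sentence is represented by the average of its word vectors, one common (assumed) way to compare sentences. The embeddings are hypothetical 3-dimensional stand-ins chosen so that "love"/"like" and "dogs"/"puppies" are close, as trained vectors typically are; they are not output from a real model. All function names are illustrative.

```python
import math
from collections import Counter


def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def tfidf_vectors(docs):
    """TF-IDF vectors over a shared vocabulary, using tf * log(N / df)."""
    tokenized = [d.lower().split() for d in docs]
    vocab = sorted({t for toks in tokenized for t in toks})
    df = Counter(t for toks in tokenized for t in set(toks))
    n = len(docs)
    return [[toks.count(t) / len(toks) * math.log(n / df[t]) for t in vocab]
            for toks in tokenized]


def average_vector(doc, embeddings):
    """Represent a sentence as the mean of its word vectors."""
    vecs = [embeddings[w] for w in doc.lower().split()]
    return [sum(col) / len(vecs) for col in zip(*vecs)]


# Hypothetical 3-d embeddings standing in for trained Word2Vec vectors.
embeddings = {
    "i":       [0.1, 0.1, 0.1],
    "love":    [0.9, 0.1, 0.0],
    "like":    [0.8, 0.2, 0.0],
    "dogs":    [0.0, 0.9, 0.3],
    "puppies": [0.1, 0.8, 0.4],
}

s1, s2 = "I love dogs", "I like puppies"
t1, t2 = tfidf_vectors([s1, s2])
print(round(cosine(t1, t2), 3))  # 0.0
e1, e2 = average_vector(s1, embeddings), average_vector(s2, embeddings)
print(round(cosine(e1, e2), 3))  # close to 1 with these toy vectors
```

The TF-IDF score is exactly zero here because the only shared word, "i", appears in both sentences and so receives an IDF of log(2/2) = 0.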

When to Use TF-IDF vs Word2Vec

Use TF-IDF For:

- Keyword extraction

- Simple search engines

- Small datasets

- Tasks where fast processing is needed

Use Word2Vec For:

- Semantic search

- Chatbots

- Recommendation systems

- Text similarity tasks

- Other NLP applications that depend on word meaning

Real-World Applications of Word2Vec

- Search engines

- Voice assistants

- Machine translation

- Sentiment analysis

- Spam detection

- Recommendation systems

Key Takeaway

- TF-IDF counts words.

- Word2Vec understands words.

- TF-IDF = Importance of words

- Word2Vec = Meaning of words

Conclusion

Word2Vec revolutionized NLP by enabling machines to capture relationships between words rather than just counting them. While TF-IDF remains useful for simple tasks, modern AI systems rely heavily on Word2Vec and the embedding techniques that followed it for deeper semantic understanding.
