Sentence embeddings are numerical vector representations of entire sentences that capture their meaning and context.

In simple words:

Sentence Embeddings = Converting a full sentence into numbers that represent its meaning.

Why Do We Need Sentence Embeddings?

Computers cannot understand text directly. They need numbers.

Earlier methods like:

- Bag of Words

- TF-IDF

- Word2Vec

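only worked at the word level: Bag of Words and TF-IDF count words and ignore their order, while Word2Vec gives each word a single vector regardless of the sentence around it. But much of the meaning in real language comes from complete sentences, not individual words.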

That’s why sentence embeddings were introduced.

Simple Example

Sentence 1:

“I love eating pizza.”

Sentence 2:

“I enjoy having pizza.”

Although the words differ, the meaning is nearly the same.

A sentence embedding model converts both into very similar vectors, so an AI system can tell that they mean almost the same thing.

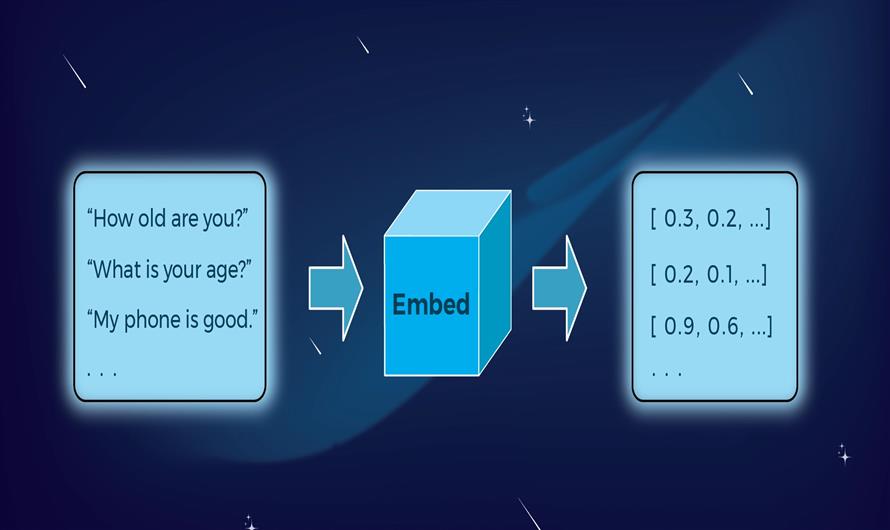

How Sentence Embeddings Work

AI models convert a sentence into a fixed-length vector (a list of numbers). The vector has the same length no matter how long the sentence is.

Example:

"I love AI" →

[0.21, -0.34, 0.89, 0.12, …]

These numbers capture:

- Meaning

- Context

- Grammatical relationships

Key Idea Behind Sentence Embeddings

Sentences with similar meanings have vectors that are close together.

Similarity is measured using:

- Cosine similarity (most common)

- Euclidean distance
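
Both measures can be sketched in a few lines of plain Python. The three 4-dimensional vectors below are made up for illustration; real sentence embeddings come from a trained model and usually have hundreds of dimensions. A higher cosine similarity means more similar, while a lower Euclidean distance means more similar.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two vectors: 1.0 means same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def euclidean_distance(a, b):
    """Straight-line distance between two vectors: 0.0 means identical."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

# Toy vectors (assumed values, not the output of any real model)
pizza_love = [0.8, 0.1, 0.6, 0.2]    # stands in for "I love eating pizza."
pizza_enjoy = [0.7, 0.2, 0.6, 0.1]   # stands in for "I enjoy having pizza."
weather = [-0.3, 0.9, -0.1, 0.5]     # stands in for an unrelated sentence

print(cosine_similarity(pizza_love, pizza_enjoy))
print(cosine_similarity(pizza_love, weather))
print(euclidean_distance(pizza_love, pizza_enjoy))
print(euclidean_distance(pizza_love, weather))
```

With these toy values, the two pizza vectors score a higher cosine similarity and a smaller Euclidean distance than the pizza and weather pair.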

How Sentence Embeddings Are Created

Most are generated by deep learning models trained on large amounts of text.

Common techniques include:

1. Averaging Word Embeddings

Older method: average the vectors of the individual words in the sentence.

Simple, but less accurate, because word order and context are lost.
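
A minimal sketch of the averaging approach, assuming a tiny hand-written lookup table of 3-dimensional word vectors (the `word_vectors` values and the `average_embedding` helper are hypothetical; in practice the vectors would come from a trained model such as Word2Vec):

```python
# Hypothetical word vectors, made up for illustration
word_vectors = {
    "i": [0.1, 0.0, 0.2],
    "love": [0.9, 0.3, 0.1],
    "pizza": [0.2, 0.8, 0.5],
}

def average_embedding(sentence, vectors):
    """Average the vectors of all known words in the sentence."""
    tokens = [t for t in sentence.lower().split() if t in vectors]
    if not tokens:
        raise ValueError("no known words in sentence")
    dim = len(next(iter(vectors.values())))
    return [sum(vectors[t][i] for t in tokens) / len(tokens) for i in range(dim)]

a = average_embedding("I love pizza", word_vectors)
b = average_embedding("pizza love I", word_vectors)

print(a)
print(b)
```

Both calls produce the same vector (up to floating-point rounding) even though the word order differs, which is one reason averaging is less accurate than models that read the sentence as a whole.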

2. Neural Network Models (Modern Method)

These models understand full context:

- Transformer models

- Deep contextual embeddings

They consider:

- Word order

- Context

- Grammar

- Sentence structure

Example of Sentence Similarity

Query:

“How to lose weight?”

Sentence embeddings can match it with:

- “Tips for reducing body fat”

- “Best ways to slim down”

These match even though they share no words with the query.
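
The matching step can be sketched as ranking candidate sentences by cosine similarity to the query. Everything numeric here is an assumption: the 3-dimensional vectors are hand-made stand-ins for real model output, and the bread sentence is added only as an unrelated contrast.

```python
import math

def cosine_similarity(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Toy vectors (assumed values, not produced by any real embedding model)
corpus = {
    "Tips for reducing body fat": [0.9, 0.1, 0.2],
    "Best ways to slim down": [0.8, 0.2, 0.1],
    "How to bake sourdough bread": [0.1, 0.9, 0.3],  # unrelated, for contrast
}
query_vector = [0.85, 0.15, 0.15]  # stands in for "How to lose weight?"

def rank(query_vec, candidates):
    """Return (score, text) pairs sorted from most to least similar."""
    scored = [(cosine_similarity(query_vec, vec), text)
              for text, vec in candidates.items()]
    return sorted(scored, reverse=True)

ranked = rank(query_vector, corpus)
for score, text in ranked:
    print(f"{score:.3f}  {text}")
```

With these toy vectors, the two weight-related sentences rank above the bread sentence. A real system would obtain the vectors from a sentence embedding model instead of writing them by hand.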

Types of Embeddings (By Level)

| Level | What it Represents |
|---|---|
| Word Embeddings | Individual words |
| Sentence Embeddings | Full sentence meaning |
| Document Embeddings | Entire paragraphs/documents |

Where Sentence Embeddings Are Used

Sentence embeddings are very common in modern AI systems:

1. Semantic Search

- Search that matches results by meaning rather than by exact keywords.

2. Chatbots & AI Assistants

- Matching user intent with the best responses.

3. Text Similarity Detection

- Used in plagiarism detection and recommendations.

4. Question Answering Systems

- Finding the best answer based on meaning.

5. Recommendation Systems

- Matching similar content.

Sentence Embeddings vs Word Embeddings

| Feature | Word Embeddings | Sentence Embeddings |
|---|---|---|
| Unit | Single word | Full sentence |
| Captures context | Limited | Strong |
| Meaning accuracy | Medium | High |
| Used for | Word similarity | Semantic matching |

Simple Analogy

Think of embeddings like GPS coordinates for meaning:

- Words = locations of buildings

- Sentences = locations of cities

Two cities close on the map → similar meaning.

One-Line Definition

Sentence Embeddings convert entire sentences into numerical vectors that represent their meaning.
