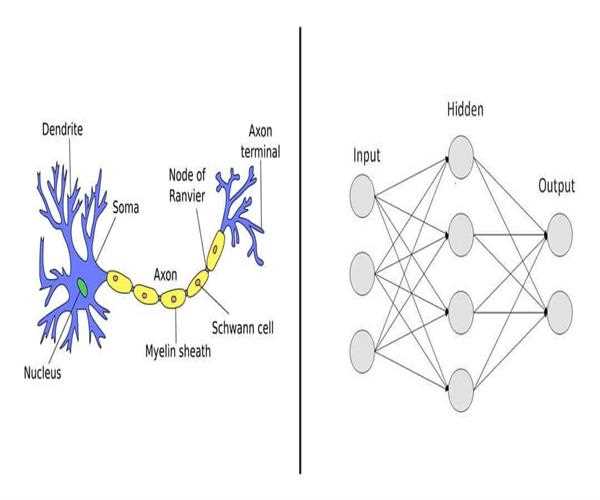

Biological Neurons vs. Artificial Neurons

- Biological neurons are the basic building blocks of the nervous system, whereas an artificial neuron, also referred to as a perceptron, is a mathematical model used in artificial neural networks. Although it operates in a computational environment, it mimics the way biological neurons behave.

- Biological neurons communicate through chemical messengers and electrical impulses, whereas artificial neurons rely on a mathematical model whose parameters are learned during training.
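To make the idea of a neuron as a mathematical model concrete, here is a minimal sketch of a perceptron in plain Python. The function name `perceptron` and the weights and bias are illustrative assumptions chosen so that the neuron behaves like a logical AND gate; they are not taken from any particular library.

```python
def perceptron(inputs, weights, bias):
    """Return 1 if the weighted sum of the inputs plus the bias is positive, else 0."""
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    return 1 if total > 0 else 0


# Hypothetical weights and bias that make the neuron act like an AND gate.
and_weights = [1.0, 1.0]
and_bias = -1.5

# Evaluate the neuron on every combination of two binary inputs.
outputs = [perceptron([a, b], and_weights, and_bias) for a in (0, 1) for b in (0, 1)]
print(outputs)  # [0, 0, 0, 1]
```

The neuron "fires" only when both inputs are 1, because only then does the weighted sum exceed the threshold set by the bias.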

Neural Networks in AI

1. Basic Structure:

A neural network can be defined as layers of interconnected nodes, or neurons.

There are three types of layers in this network:

- Input layer: Receives the data and forwards it to the hidden layers.

- Hidden layers: Receive the data from the input layer and process it to recognize patterns.

- Output layer: Receives the processed result from the last hidden layer and generates the final output.

2. Forward Propagation:

- Information travels from the input layer to the output layer via hidden layers.

- Each neuron computes a weighted sum of its inputs, adds a bias, and passes the result through an activation function.

- Repeating this calculation layer by layer produces the network's output.
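The forward pass described above can be sketched in a few lines of plain Python. This is only an illustration: the helper names (`layer_forward`, `forward`), the choice of sigmoid as the activation, and all weight values are assumptions made for the example.

```python
import math


def sigmoid(z):
    """Squash a real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def layer_forward(inputs, weights, biases):
    """Compute one layer: each neuron applies sigmoid(weights . inputs + bias)."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]


def forward(inputs, layers):
    """Pass the inputs through every layer in order and return the final activations."""
    activations = inputs
    for weights, biases in layers:
        activations = layer_forward(activations, weights, biases)
    return activations


# Illustrative network: 2 inputs -> 2 hidden neurons -> 1 output neuron.
hidden = ([[0.5, -0.5], [0.3, 0.8]], [0.0, 0.1])  # (weights, biases), made-up values
output = ([[1.0, -1.0]], [0.0])
prediction = forward([1.0, 2.0], [hidden, output])
print(prediction)
```

Each entry in `layers` holds one row of weights per neuron, so the number of rows sets the width of that layer.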

3. Activation Functions:

- By introducing nonlinearity, these functions enable NNs to pick up intricate patterns.

- Typical activation functions:

1. Sigmoid: Maps inputs into the range 0 to 1.

2. ReLU (Rectified Linear Unit): Outputs the input if it is positive and 0 otherwise.

3. Tanh (hyperbolic tangent): Maps inputs into the range -1 to 1.
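The three activation functions listed above are short enough to write out directly. The sketch below uses only Python's standard `math` module; the sample inputs are arbitrary.

```python
import math


def sigmoid(z):
    """Map any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def relu(z):
    """Return the input if it is positive, otherwise 0."""
    return max(0.0, z)


def tanh(z):
    """Map any real number into the range (-1, 1)."""
    return math.tanh(z)


# Compare the three functions on a few arbitrary sample inputs.
for z in (-2.0, 0.0, 2.0):
    print(z, sigmoid(z), relu(z), tanh(z))
```

Note that this simple `sigmoid` can overflow for very large negative inputs; production libraries use numerically safer formulations.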

4. Backpropagation:

- The network modifies its weights and biases while it is being trained.

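- The error between the expected and actual outputs is propagated backward through the network, layer by layer, to determine how much each weight and bias contributed to it.

- The network adjusts these parameters iteratively to improve its performance.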
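As a rough illustration of how the error flows backward, the sketch below trains the smallest possible network: one input, one hidden neuron, and one output neuron, each with a sigmoid activation. The function name `backprop_step`, the squared-error loss, the learning rate, and the starting parameters are all assumptions made for this example.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def backprop_step(x, target, params, lr=0.5):
    """Run one forward pass, one backward pass, and one parameter update."""
    w1, b1, w2, b2 = params

    # Forward pass: input -> hidden neuron -> output neuron.
    h = sigmoid(w1 * x + b1)
    y = sigmoid(w2 * h + b2)
    loss = 0.5 * (y - target) ** 2

    # Backward pass: apply the chain rule from the output back to the input.
    delta_out = (y - target) * y * (1 - y)        # error signal at the output neuron
    delta_hidden = delta_out * w2 * h * (1 - h)   # error signal passed back to the hidden neuron

    # Move each parameter a small step against its gradient.
    new_params = (
        w1 - lr * delta_hidden * x,
        b1 - lr * delta_hidden,
        w2 - lr * delta_out * h,
        b2 - lr * delta_out,
    )
    return new_params, loss


params = (0.4, 0.0, -0.3, 0.0)  # arbitrary starting values
losses = []
for _ in range(200):
    params, loss = backprop_step(1.0, 1.0, params)
    losses.append(loss)

print(losses[0], losses[-1])  # the loss shrinks as training proceeds
```

The key line is `delta_hidden`: the hidden neuron's share of the error is computed from the output neuron's error, which is what "propagating backward" means in practice.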

5. Training:

- Neural networks are commonly trained using labeled data, that is, pairs of inputs and their expected outputs.

- Prediction error is measured by loss functions (e.g., mean squared error).

- Weights are changed by optimization methods, such as gradient descent, in order to reduce loss.
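The training loop described above can be sketched with the simplest possible model, a single linear neuron fitted by gradient descent on mean squared error. The function names, the learning rate, the number of epochs, and the toy dataset (points on the line y = 2x + 1) are assumptions chosen for illustration.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences between predictions and targets."""
    return sum((p - t) ** 2 for p, t in zip(predictions, targets)) / len(targets)


def train_linear(xs, ys, lr=0.05, epochs=2000):
    """Fit y = w * x + b by gradient descent on the mean squared error."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        preds = [w * x + b for x in xs]
        # Gradients of the mean squared error with respect to w and b.
        grad_w = sum(2 * (p - y) * x for p, y, x in zip(preds, ys, xs)) / n
        grad_b = sum(2 * (p - y) for p, y in zip(preds, ys)) / n
        # Step against the gradient to reduce the loss.
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


# Toy labeled data: inputs paired with outputs from y = 2x + 1.
xs = [0.0, 1.0, 2.0, 3.0]
ys = [1.0, 3.0, 5.0, 7.0]

w, b = train_linear(xs, ys)
final_loss = mean_squared_error([w * x + b for x in xs], ys)
print(w, b, final_loss)  # w approaches 2, b approaches 1, loss approaches 0
```

A full neural network follows the same loop; the only difference is that the gradients are obtained by backpropagation through many layers.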

6. Uniqueness and Deep Learning:

- Deep Learning: Neural networks with many layers are called deep architectures, and they form the basis of deep learning.

- Feature extraction: Neural networks automatically extract relevant features from raw data and learn from them.

- Generalization: A well-trained network can make useful predictions on inputs it did not see during training.

- End-to-End Learning: Neural networks learn a direct mapping from input to output, without the need for manual feature engineering.

7. Uses:

- Image Recognition: Neural networks are very good at recognizing objects in images.

- Natural Language Processing (NLP): Recurrent neural networks (RNNs) are used to process sequences, such as text and speech.

- Other areas: Neural networks also play a vital role in finance, healthcare, recommendation systems, and other fields.

Conclusion:

Neural networks can be thought of as connected puzzle pieces that together contribute to the larger picture of artificial intelligence.
